AI in higher education · 2026-04-18 · ~9 min read

The standard story about Indian AI talent has two halves, and they contradict each other.

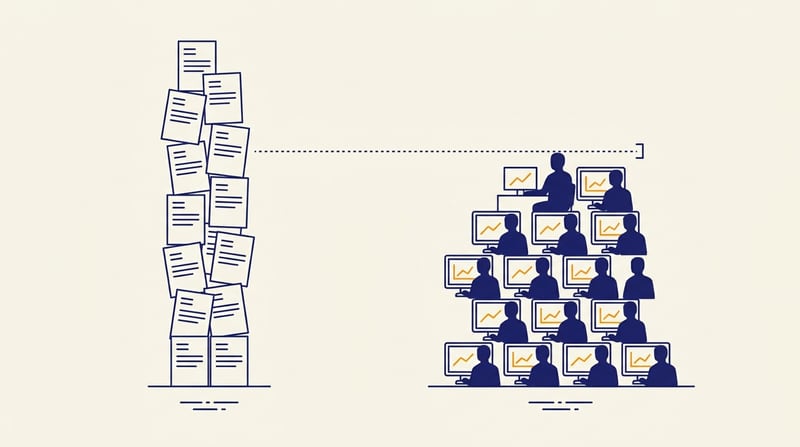

- India produces more engineering graduates than any other country in the world and supplies the largest single share of the global tech workforce. By that account, India should be the most over-supplied AI talent market on earth.

- Every Indian AI hiring manager you talk to says the same things: they cannot fill their open requisitions, the candidates they do interview are weak, and salaries for actually capable AI engineers in Bengaluru are now within shouting distance of their Bay Area equivalents. (Senior GenAI engineers at Bengaluru product companies routinely clear ₹35–80 LPA, with remote-for-US roles pushing past ₹60–80 LPA. What was once a ~9× gap to equivalent US compensation has narrowed sharply over the past three years.¹)

Both halves are true. Reconciling them is the work.

What the gap actually is

Reframe the gap from quantity to capability

A useful framing: the gap is not a quantity gap. It is the gap between the number of people whose resumes describe them as AI engineers and the number who can demonstrably do AI engineering work.

The gap is not a shortage of resumes. It is a shortage of demonstrable capability behind those resumes.